The Wayback Machine, a cornerstone of digital preservation operated by the Internet Archive, faces a threat to its survival as a growing number of major media outlets block it from archiving their content. For roughly three decades, the San Francisco-based nonprofit has archived more than a trillion web pages, serving as an indispensable tool for journalists, researchers, historians, and lawyers who need to view deleted or altered online material. Yet the very organizations that often rely on the archive are now undermining its mission: at least 241 news organizations across nine countries, including the UK's Guardian, the New York Times, France's Le Monde, and USA Today Co., are blocking its web crawlers.

The primary driver behind this media blockade is the fear that artificial intelligence (AI) companies are exploiting archived content without permission or compensation. Graham James, a spokesperson for the New York Times, put it bluntly: "The issue is that Times content on the Internet Archive is being used by AI companies in violation of copyright law to directly compete with us." Bots have accessed the archive on a massive scale to harvest media content for AI training. Mark Graham, Director of the Wayback Machine, confirmed to Wired magazine that several companies made tens of thousands of requests per second, temporarily overloading the archive's servers.

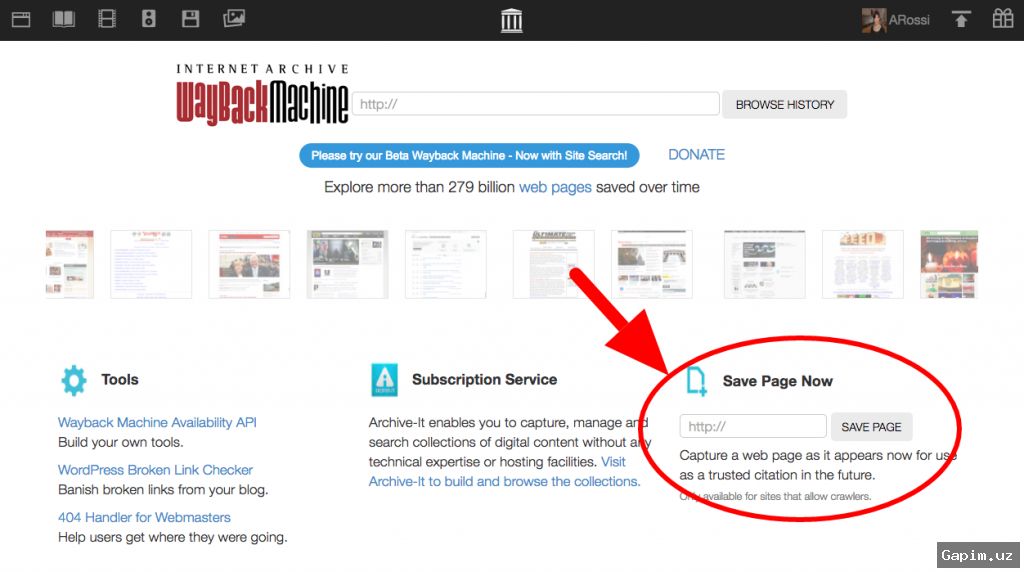

The Internet Archive, committed to an open internet ethos, has resisted excluding bots and crawlers, and publishers have retaliated by blocking it. In response, more than 100 journalists have signed a petition supporting the archive, warning in an open letter that "in a digital media landscape where articles disappear due to link rot or corporate consolidation, reporters frequently rely on the Archive's Wayback Machine to recover pages that would otherwise be lost." Graham is in talks with media outlets to restore access but cautions that "the general locking-down of more and more of the public web is impacting society's ability to understand what's going on in our world."

Media journalist Martin Fehrensen argues that the Internet Archive is the sole functional custodian of the open web, and its incapacitation would have profound implications: "Millions of Wikipedia source notes lose their roots, research on platform accountability becomes significantly more difficult, and digital evidence admissible in court ceases to exist." He proposes two solutions: a technical separation between archiving and AI training, and establishing a special legal status for web archives. In the long term, Fehrensen asserts that "web archiving has to be treated like public infrastructure, not as a single project by a San-Francisco-based NGO."

This is not the first challenge for the Internet Archive. A cyberattack in September 2024 compromised 31 million user accounts, and the organization lost the copyright lawsuit "Hachette v. Internet Archive," which resulted in the removal of over 500,000 books from its lending program. Yet the current threat from media blockades is structurally more severe: it stems from numerous separate corporate decisions that collectively erode the archive's core objective of comprehensively preserving the public web, and it cannot be resolved by court rulings or software updates alone.

Source: www.dw.com